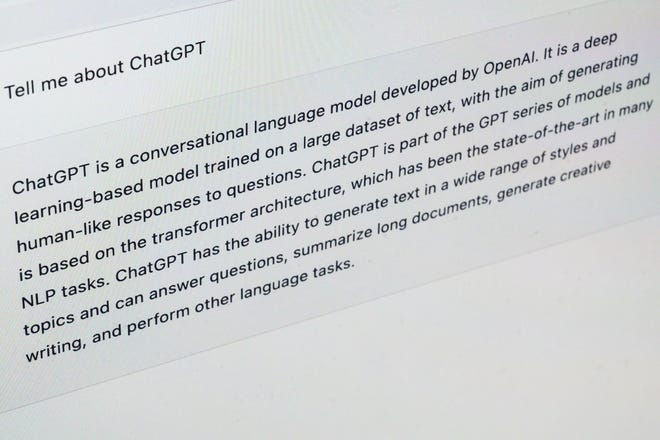

ChatGPT’s striking ability to respond to people’s questions with instantly generated, easy-to-understand answers could be a game-changer for people searching for medical advice, but a new medical journal essay says it’s not quite that simple. And so does ChatGPT.

Peter Lee, a vice president at Microsoft, has spent the last six months experimenting with the latest edition of ChatGPT, an artificial intelligence platform designed to answer user questions in a conversational tone.

Like other so-called large language models, ChatGPT, made by San Francisco-based startup OpenAI, is trained on vast amounts of data available on the internet, in books and from other sources. It uses algorithms to generate words in sequence based on the frequency with which they appear together online. Microsoft’s Bing search engine now uses ChatGPT.

Lee, a computer scientist who runs Microsoft research around the world, is particularly interested in the medical and scientific potential of ChatGPT.

PREVIOUSLY: ChatGPT is poised to upend medical information. For better and worse.

He published an essay Thursday in The New England Journal of Medicine about the potential uses of ChatGPT for recording medical notes, offering diagnostic possibilities, educating clinicians and patients and analyzing and summarizing data. Particularly notable, he said, was the interface’s ability to provide empathetic, human-like responses.

We asked Lee, also co-author of the forthcoming book “The AI Revolution in Medicine: GPT-4 and Beyond,” about his enthusiasm for and concerns about the latest version of ChatGPT, now ChatGPT-4, which was released this month. We also posed the same questions to ChatGPT, but because its availability is still limited, the responses are from ChatGPT-3.

Question: Does ChatGPT provide reliable medical information?

Lee: I personally would not trust models at that level of sophistication for my own personal health advice or information and I would be concerned if doctors and nurses were using it for medical decision-making.

ChatGPT: ChatGPT is a language model trained on vast amounts of text data, including medical information. However, it’s important to note that ChatGPT is not a medical professional, and its responses should not be considered as medical advice.

While ChatGPT may provide information on medical topics, it’s essential to verify that information with a qualified medical professional before making any decisions regarding your health. There is also a risk that ChatGPT may provide inaccurate or outdated medical information, which could potentially be harmful.

Question: What kinds of mistakes does ChatGPT commonly make and why?

Lee: GPT models generally are very bad at information retrieval. It’s almost by design because the GPT models don’t want to regurgitate their training data. If you’re asking it for a specific source, like a reference to a paper, it’s not able to resurrect the exact source, so it makes something up, it ‘hallucinates.’

ChatGPT: ChatGPT may make mistakes such as providing irrelevant or incorrect responses, repeating itself, or producing responses that are inconsistent with the context of the conversation.

These mistakes can occur because ChatGPT is trained on vast amounts of text data, including unverified and potentially biased information, which can lead to incorrect or outdated information.

Additionally, ChatGPT’s responses may be affected by the quality of the input it receives, which can result in irrelevant or off-topic responses.

Question: How is ChatGPT-4 better than ChatGPT-3?

Lee: We get to a different level of intelligence. The fundamental thing is you have this AI model that has a lot of cognitive capability and you have a chat interface. It ends up being really natural to interact with.

ChatGPT: It’s reasonable to expect improvements in areas such as scale, accuracy, multilingual capabilities, contextual understanding, and consistency.

Question: ChatGPT sometimes sounds like a real person. Does ChatGPT have a ‘theory of mind,’ the capacity to imagine the mental states of others?

Lee: It can’t possibly do this. It’s a machine. But you can ask GPT-4 what would the patient described (in a particular situation) be thinking and feeling. It’s able to assess that state of mind. … It translates into a sense of empathy for the patients.

ChatGPT: No, ChatGPT does not have a theory of mind.

ChatGPT is a machine learning model that uses statistical patterns and algorithms to generate responses to natural language inputs. It does not have consciousness, emotions, or beliefs, and it does not possess the capacity to understand or infer the mental states of human users.

While ChatGPT is capable of generating responses that may be empathetic, engaging, and conversational, it does not possess a theory of mind, which is a crucial aspect of human cognition and social interaction.

Question: Should ChatGPT be used in medicine?

Lee: Society has a choice to make. We can try to slow down or shut down this technology, because we don’t understand the risks completely. A second argument is more fatalist, saying: ‘it’s unstoppable. We might as well give it a try.’ A third choice, which I think is the only rational one, but it has to be made intentionally, is to think hard about how to integrate this technology into our lives so that we can be accomplishing things that neither humans alone nor AI alone can do. I’m hoping the medical community and the public will choose that third option.

ChatGPT: ChatGPT should not be solely relied upon for medical advice or diagnosis. While ChatGPT may be able to provide information on medical topics, it is not a medical professional, and its responses should be considered as informational only.

However, ChatGPT can be a helpful tool for gaining a general understanding of medical concepts and terminology. In any case, it’s essential to verify any information provided by ChatGPT with a qualified medical professional to ensure that it’s accurate and applicable to your situation.

Contact Karen Weintraub at kweintraub@usatoday.com.

Health and patient safety coverage at USA TODAY is made possible in part by a grant from the Masimo Foundation for Ethics, Innovation and Competition in Healthcare. The Masimo Foundation does not provide editorial input.
